Professor Andrej Cherkaev,

Department of Mathematics

Office: JWB 225

Email: cherk@math.utah.edu

Tel : +1 801 - 581 6822

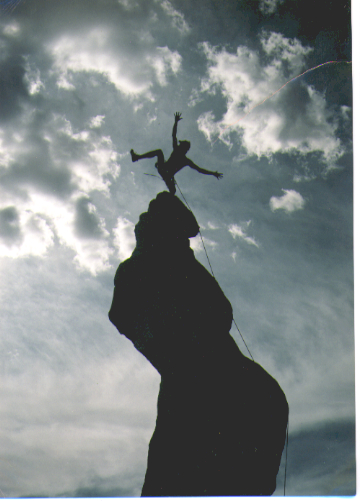

Search for the Perfection:

An image from

Bridgeman Art Library

Topics in Applied Math: Methods of Optimization


The course is designed for graduate students in Math, Science, and Engineering. It covers algorithms and methods for finding extrema of functions of one or several variables, including search methods such as gradient, conjugate gradient, and quasi-Newton, as well as the basics of linear, quadratic, and convex programming. We also discuss modeling, dealing with uncertainty in data, and optimization of large, poorly defined objects. Students are encouraged to apply the methods to their research; course projects will be assigned.

Prerequisites: Calculus, Linear Algebra/ODE, elementary programming.

|

Optimization and aesthetics

The inherent human desire to optimize is celebrated in the famous Dante quotation:

|

|

Optimization and Nature

The general principle of Maupertuis proclaims:

|

| The essence of an optimization problem is: catching a black cat in a dark room in minimal time. (A constrained optimization problem corresponds to a room full of furniture.)

A light, even a dim one, is needed. Hence optimization methods exploit assumptions about how the goal function responds to varying parameters, and suggest the best way to change them. The variety of a priori assumptions corresponds to the variety of optimization methods. This variety explains why there is no silver bullet in optimization theory. |

|

Semantics

Optimization theory is developed by ingenious and creative people who regularly appeal to vivid common-sense associations, formulating them in a general mathematical form. For instance, the theory steers numerical searches through canyons and passes (saddles) towards the peaks; it fights the curse of dimensionality; it models evolution, gambling, and other human passions. The optimizing algorithms themselves are mathematical models of intellectual and intuitive decision making.


Introduction to Optimization

Everyone who has studied calculus knows that an extremum of a smooth function is reached at a stationary point, where its gradient vanishes. Some may also remember the Weierstrass theorem, which states that a continuous function attains its minimum and its maximum on a closed bounded domain. Does this mean that the problem is solved?

A small thing remains: to actually find that maximum. This problem is the subject of optimization theory, which deals with algorithms that search for the extremum. More precisely, we are looking for an algorithm that approaches a neighborhood of this maximum, and we are allowed to evaluate (measure) the function at only a finite number of points. Below, some links to mathematical societies and groups in optimization testify to how popular optimization theory is today: many hundreds of groups are working on it intensively.

Optimization and Modeling

The modeling of the optimized process is conducted along with the optimization. Inaccuracy of the model is amplified in an optimization problem, since optimization usually drives the control parameters to the edge, where a model may fail to describe the prototype accurately. For example, when a linearized model is optimized, the optimum often corresponds to an infinite value of the linearized control. On the other hand, the roughness of the model should not be viewed as a negative factor, since the simplicity of a model is as important as its accuracy. Recall the joke about the most accurate geographical map: it is drawn at 1:1 scale.

Unlike models of physical phenomena, optimization models depend critically on the designer's will. Firstly, different aspects of the process are emphasized or neglected depending on the optimization goal. Secondly, it is not easy to set the goal and the specific constraints for optimization. Naturally, one wants to produce more goods, at the lowest cost and with the highest quality. To optimize the production, one may either constrain the cost and the quality at some level and maximize the quantity, or constrain the quantity and the quality and minimize the cost, or constrain the quantity and the cost and maximize the quality. There is no way to avoid the difficult choice of the values of the constraints. The mathematical tricks go no farther than: "Better be healthy and wealthy than poor and ill". True, but still not too exciting.

The maximization of monetary profit solves the problem to some extent by applying a universal criterion. Still, short-term and long-term profits require very different strategies, and it is necessary to assign the level of insurance, to account for possible market variations, etc. These variables must be assigned a priori by the researcher.

Sometimes the solution of an optimization problem shows unexpected features: for example, an optimal trajectory zigzags infinitely often. Such behavior is unexpected but optimal. It should not be rejected as a mathematical extravaganza, but thought through!
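The remark about linearized models can be illustrated with a small sketch. Everything in it is an assumption made for illustration: the quadratic "prototype" `true_profit`, its coefficients, and the crude grid search `best_on_grid` are hypothetical and are not taken from the course. The point is only that the optimum of the linearized model follows whatever bound is imposed on the control, while the optimum of the prototype does not move.

```python
def true_profit(x, a=4.0, b=1.0):
    """Hypothetical prototype: gain a*x with a saturation penalty b*x**2."""
    return a * x - b * x ** 2


def linearized_profit(x, a=4.0):
    """Linearization of true_profit near x = 0: the penalty term is dropped."""
    return a * x


def best_on_grid(f, upper, n=2001):
    """Return the grid point in [0, upper] at which f is largest."""
    xs = [upper * i / (n - 1) for i in range(n)]
    return max(xs, key=f)


# Relax the (artificial) bound on the control and watch both optima.
for upper in (10.0, 100.0, 1000.0):
    x_lin = best_on_grid(linearized_profit, upper)
    x_true = best_on_grid(true_profit, upper)
    print(f"bound={upper:7.1f}  linearized optimum={x_lin:7.1f}  prototype optimum={x_true:5.2f}")
```

In this sketch the linearized optimum always sits at the bound, so removing the bound sends it to infinity, exactly where the linearization stops describing the prototype.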


Basic rules for optimization algorithms

There is no smart algorithm for choosing the oldest person from an alphabetical telephone directory.

This says that some properties of the maximized function must be assumed a priori. Without assumptions, no rational algorithm can be suggested. The search methods approximate, directly or indirectly, the behavior of the function in the neighborhood of the measurements. The approximation is based on the assumed smoothness or, sometimes, convexity. Different methods assume different types of approximation.
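As one concrete instance of approximating a function near its measurements, the sketch below fits a parabola through three measured points and returns the abscissa of its vertex as the next guess for the extremum. This is the textbook parabolic-interpolation step, chosen here only as an illustration; the helper name `parabola_vertex` and the test function are assumptions, not part of the course material. The step is justified only if the function is smooth enough to resemble a parabola near the measurements.

```python
import math


def parabola_vertex(x1, f1, x2, f2, x3, f3):
    """Abscissa of the vertex of the parabola through three measured points."""
    num = (x2 - x1) ** 2 * (f2 - f3) - (x2 - x3) ** 2 * (f2 - f1)
    den = (x2 - x1) * (f2 - f3) - (x2 - x3) * (f2 - f1)
    if den == 0.0:
        raise ValueError("the three points are collinear; no vertex exists")
    return x2 - 0.5 * num / den


# Three measurements of a smooth function whose maximum is at pi/2.
points = [1.0, 1.5, 2.0]
values = [math.sin(x) for x in points]
guess = parabola_vertex(points[0], values[0], points[1], values[1], points[2], values[2])
print("estimated maximizer:", guess, " exact:", math.pi / 2)
```

For an exactly quadratic function the step lands on the extremum in one move; for other smooth functions it has to be repeated with updated points.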

My maximum is higher than your maximum!

Generally, there is no way to predict the behavior of the function everywhere in the permitted domain. An optimized function may have more than one local maximum. Most methods steer the search to a local maximum without a guarantee that this maximum is also the global one. Methods that do guarantee the global character of the maximum require additional assumptions, such as convexity.
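A minimal sketch of this effect, assuming a hypothetical quartic test function with two local maxima of different heights: plain gradient ascent with a fixed step (an illustrative choice, not a recommended method) ends at whichever local maximum is uphill from its starting point. The function, the step size, and the iteration count are all assumptions.

```python
def f(x):
    """Hypothetical test function with two local maxima of different heights."""
    return -x ** 4 + 2.0 * x ** 2 + 0.5 * x


def df(x):
    """Derivative of f."""
    return -4.0 * x ** 3 + 4.0 * x + 0.5


def gradient_ascent(x0, step=0.05, iters=200):
    """Fixed-step gradient ascent started from x0."""
    x = x0
    for _ in range(iters):
        x += step * df(x)
    return x


x_left = gradient_ascent(-1.5)   # climbs to the lower peak
x_right = gradient_ascent(1.5)   # climbs to the higher peak
print("start -1.5 ->", x_left, " f =", f(x_left))
print("start +1.5 ->", x_right, " f =", f(x_right))
```

Both runs stop at a stationary point, and nothing in the local information available to the left run indicates that a higher peak exists elsewhere.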

Several classical optimization problems serve as testing grounds for optimization algorithms: the maximum of a one-dimensional unimodal function, the mean-square approximation, and linear and quadratic programming.
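For the first of these test problems, a standard choice is golden-section search, sketched below under the assumption that the function is unimodal on the given interval. The function name `golden_section_max`, the tolerance, and the quadratic example are illustrative assumptions rather than course material.

```python
import math


def golden_section_max(f, a, b, tol=1e-6):
    """Locate the maximizer of a unimodal function f on [a, b]."""
    invphi = (math.sqrt(5.0) - 1.0) / 2.0   # 1/phi, about 0.618
    c = b - invphi * (b - a)
    d = a + invphi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc > fd:
            # The maximum lies in [a, d]; the old c becomes the new d.
            b, d, fd = d, c, fc
            c = b - invphi * (b - a)
            fc = f(c)
        else:
            # The maximum lies in [c, b]; the old d becomes the new c.
            a, c, fc = c, d, fd
            d = a + invphi * (b - a)
            fd = f(d)
    return 0.5 * (a + b)


x_star = golden_section_max(lambda x: 1.0 - (x - 2.0) ** 2, 0.0, 5.0)
print("maximizer found near", x_star)
```

Each iteration shrinks the interval by the same factor and costs one new function evaluation, because one of the two interior points is reused. Unimodality is the only property of the function that the method relies on.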

Below, there are some comments and examples of optimization problems.

Sharp Maximum is achieved! Photo by and courtesy of Dr. Robert (Bob) Palais |

The sharpness of the maximum is of special interest. It is very difficult to find the maximum of a flat function, especially in the presence of numerical errors. For instance, the point of maximum elevation in a flat region is much harder to locate than a peak in the mountains. A sharp maximum (left figure) also requires special methods, since the gradient is discontinuous: it does not exist at the optimal point. |
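Both difficulties can be seen in a few lines. The two test functions below, `flat` and `sharp`, and the step `h` are hypothetical choices made for this sketch. Near the flat peak a small displacement changes the value by less than double precision can resolve, so measurements cannot tell the points apart. At the sharp peak the one-sided slopes disagree, so there is no gradient to set to zero.

```python
def flat(x):
    """Flat peak at x = 0: the value changes only in fourth order."""
    return 1.0 - x ** 4


def sharp(x):
    """Sharp peak at x = 0: the slope jumps from +1 to -1."""
    return 1.0 - abs(x)


h = 1e-5

# Flat peak: the displaced point is numerically indistinguishable from the peak.
flat_is_blind = flat(h) == flat(0.0)

# Sharp peak: the displacement is clearly visible in the value ...
sharp_sees_step = sharp(h) < sharp(0.0)

# ... but the one-sided difference quotients do not agree at the peak.
left_slope = (sharp(0.0) - sharp(-h)) / h
right_slope = (sharp(h) - sharp(0.0)) / h

print("flat peak hides the step:", flat_is_blind)
print("sharp peak shows the step:", sharp_sees_step)
print("one-sided slopes at the sharp peak:", left_slope, right_slope)
```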

| Structural Optimization is an interesting and tricky class of optimization problems. It is characterized by a large number of variables that represent the shape of the design and the stiffness of the available materials. The control is the layout of the materials in the volume of the structure. |

|


NSF support is acknowledged.